This post was written as a how-to guide for the capsul cloud compute provider by cyberia.club!

SSH is a very old tool, created back when the internet was a different place, with different use cases and concerns. In many ways, the protocol has failed to evolve to meet the needs of our 21st century global internet. Instead, the users of SSH (tech heads, sysadmins, etc) have had to evolve our processes to work around SSH's limitations.

These days, we use SSH + public-key cryptography to establish secure connections to our servers. If you are not familiar with the concept of public key cryptography, cryptographic signatures, or Diffie-Hellman key exchange, you may wish to see the Wikipedia article for a refresher.

Public Key Crypto and Key Exchange: The TL;DR

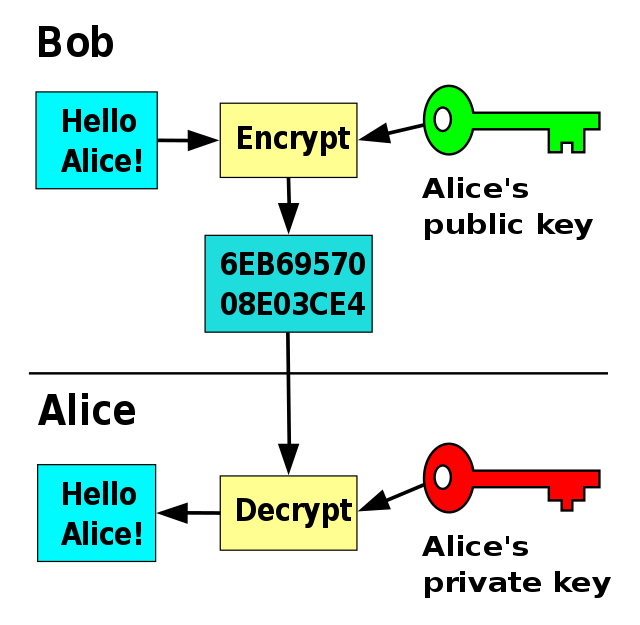

Computers can generate "key pairs" which consist of a public key and a private key. Given a key pair A:

- A computer which has access to public key A can encrypt data, and then ONLY a computer which has access to private key A can decrypt & read it

- Likewise, a computer which has access to private key A can encrypt data, and any computer which has access to public key A can decrypt it, thus PROVING the message must have come from someone who possesses private key A
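To make those two properties concrete, here is a minimal sketch using "textbook" RSA with tiny primes. It is a toy for illustration only (real key pairs use enormous numbers plus padding schemes), and the function names `make_key_pair` and `apply_key` are made up for this example.

```python
# Toy RSA with tiny primes: for illustration only, never for real security.

def make_key_pair(p, q, e=17):
    """Return (public_key, private_key) built from two primes p and q."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(e, -1, phi)  # modular inverse of e (needs Python 3.8+)
    return (e, n), (d, n)

def apply_key(key, number):
    """In textbook RSA the same operation encrypts, decrypts, signs and verifies."""
    exponent, modulus = key
    return pow(number, exponent, modulus)

public_a, private_a = make_key_pair(61, 53)

message = 42  # must be smaller than the modulus (61 * 53 = 3233)

# Anyone with public key A can encrypt; only private key A can decrypt.
ciphertext = apply_key(public_a, message)
decrypted = apply_key(private_a, ciphertext)

# The holder of private key A can "sign"; anyone with public key A can check it.
signature = apply_key(private_a, message)
verified = apply_key(public_a, signature)

print(decrypted == message, verified == message)  # True True
```

Note that real signature schemes sign a hash of the message rather than the message itself; the sketch skips that to keep the symmetry between the two bullet points visible.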

Key exchange is a process in which two computers, Computer A and Computer B (often referred to as Alice and Bob), both create key pairs, so you have key pair A and key pair B, for a total of 4 keys:

- public key A

- private key A

- public key B

- private key B

The exchange then goes like this:

- computer A sends computer B its public key

- computer B sends computer A its public key

- computer A sends computer B a message which is encrypted with computer B's public key

- computer B sends computer A a message which is encrypted with computer A's public key

The way this process is carried out allows A and B to communicate with each other securely, which is great. HOWEVER, there is a catch!!

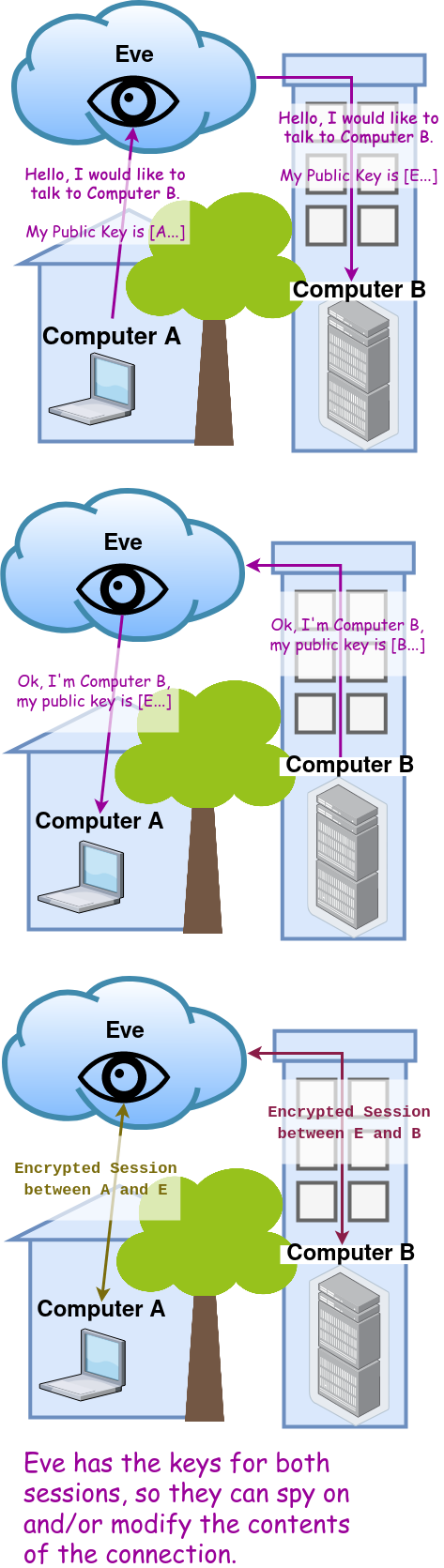

When computers A and B are trying to establish a secure connection for the first time, we assume that the way they communicate right now is NOT secure. That means that someone on the network between A and B can read & modify all messages they send to each other! You might be able to see where this is heading...

When computer A sends its public key to computer B, someone in the middle (let's call it computer E, or Eve) could record that message, save it, and then replace it with a forged message to computer B containing public key E (from a key pair that computer E generated).

If this happens, when computer B sends an encrypted message to computer A, B thinks that A's public key is actually public key E, so it will use public key E to encrypt. And again, computer E in the middle can intercept the message, and they can decrypt it as well because they have private key E. Finally, they can relay the same message to computer A, this time encrypted with computer A's public key.

This is called a Man In The Middle (MITM) attack.

Without some additional verification method, Computer A AND Computer B can both be duped and the connection is NOT really secure.
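The attack can be simulated in a few lines. This is a toy model under loud assumptions: textbook RSA with tiny primes stands in for real cryptography, and `insecure_network` is a made-up helper that represents an attacker-controlled network path.

```python
# Toy simulation of Eve's attack. Insecure by design; all names are illustrative.

def make_key_pair(p, q, e=17):
    """Return (public_key, private_key) for textbook RSA with primes p and q."""
    n, phi = p * q, (p - 1) * (q - 1)
    return (e, n), (pow(e, -1, phi), n)

def apply_key(key, number):
    exponent, modulus = key
    return pow(number, exponent, modulus)

public_a, private_a = make_key_pair(61, 53)
public_b, private_b = make_key_pair(67, 71)
public_e, private_e = make_key_pair(73, 79)

def insecure_network(sent_key, attacker_key=None):
    """Deliver a public key; an attacker on the path may swap in their own."""
    return attacker_key if attacker_key is not None else sent_key

# A sends its public key to B, but Eve replaces it in transit.
key_b_believes_is_a = insecure_network(public_a, attacker_key=public_e)

secret = 1234  # smaller than every modulus used above

# B encrypts for "A", but is actually using Eve's public key.
ciphertext_from_b = apply_key(key_b_believes_is_a, secret)

# Eve decrypts, reads the secret, then re-encrypts with A's real public key.
stolen = apply_key(private_e, ciphertext_from_b)
relayed = apply_key(public_a, stolen)

# A decrypts successfully and has no idea anything went wrong.
received_by_a = apply_key(private_a, relayed)

print(stolen, received_by_a)  # 1234 1234
```

Both ends see a working "encrypted" channel, which is exactly why some out-of-band way of authenticating public keys is needed.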

Authenticating Public Keys: A Tale of Two Protocols

Now that we have seen how key exchange works, and we understand that in order to prevent MITM attacks, all participants have to have a way of knowing whether a given public key is authentic or not, I can explain what I meant when I said that "[SSH] has failed to evolve to meet the needs of our 21st century global internet".

In order to explain this, let's first look at how a different, more modern protocol, Transport Layer Security (or TLS), solved this problem. TLS (still sometimes called by its olde name, "Secure Sockets Layer" or SSL) was created to enable HTTPS, to allow internet users to log into web sites securely and purchase things online by entering their credit card number. Of course, this required security that actually works; if someone could MITM attack the connection, they could easily steal tons of credit card numbers and passwords.

In order to enable this, a standard called X.509 was adopted. X.509 dictates the data format of certificates and keys (public keys and private keys), and it also defines a simple and easy way to determine whether a given certificate (public key) is authentic. X.509 introduced the concept of a Certificate Authority, or CA. These CAs were supposed to be bank-like public institutions of power which everyone could trust. The CA would create a key pair on an extremely secure computer, and then a CA Certificate (the public side of that key pair) would be distributed along with every copy of Windows, Mac OS, and Linux. Then folks who wanted to run a secure web server could generate their OWN key pair for their web server, and pay the CA to sign their web server's X.509 certificate (public key) with the highly protected CA private key. Critically, issue date, expiration date, and the domain name of the web server, like foo.example.com, would have to be included in the X.509 certificate along with the public key. This way, when the user types https://foo.example.com into their web browser:

- The web browser sends a TLS ClientHello request to the server

- The server responds with a ServerHello & ServerCertificate message

  - The ServerCertificate message contains the X.509 certificate for the web server at foo.example.com

- The web browser inspects the X.509 certificate

  - Is the current date in between the issued date and expiry date of the certificate? If not, display an EXPIRED_CERTIFICATE error.

  - Does the domain name the user typed in, foo.example.com, match the domain name in the certificate? If not, display a BAD_CERT_DOMAIN error.

  - Does the certificate contain a valid CA signature? (can the signature on the certificate be decrypted by one of the CA Certificates included with the operating system?) If not, display an UNKNOWN_ISSUER error.

- Assuming all the checks pass, the web browser trusts the certificate and connects
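The three checks the browser makes can be sketched as a small function. This is a simplified model, not how any real browser is implemented: `ToyCertificate` and `check_certificate` are invented for this example, and the `issuer` string is a stand-in for actually verifying a cryptographic CA signature.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass
class ToyCertificate:
    domain: str
    issued: datetime
    expires: datetime
    issuer: str  # stand-in for a real cryptographic CA signature

def check_certificate(cert, typed_domain, trusted_issuers, now):
    """Return an error name, or None if the certificate should be trusted."""
    if not (cert.issued <= now <= cert.expires):
        return "EXPIRED_CERTIFICATE"
    if cert.domain != typed_domain:
        return "BAD_CERT_DOMAIN"
    if cert.issuer not in trusted_issuers:
        return "UNKNOWN_ISSUER"
    return None

cert = ToyCertificate(
    domain="foo.example.com",
    issued=datetime(2024, 1, 1, tzinfo=timezone.utc),
    expires=datetime(2025, 1, 1, tzinfo=timezone.utc),
    issuer="Example Root CA",  # hypothetical CA name
)
trusted = {"Example Root CA"}  # stand-in for the CA certificates shipped with the OS
now = datetime(2024, 6, 1, tzinfo=timezone.utc)

print(check_certificate(cert, "foo.example.com", trusted, now))  # None
print(check_certificate(cert, "bar.example.com", trusted, now))  # BAD_CERT_DOMAIN
```

In real Python code you would not write these checks yourself; `ssl.create_default_context()` returns a context that verifies the certificate chain and the hostname for you.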

This system enabled the internet to grow and flourish: purchasing from a CA was the only way to get a valid X.509 certificate for a website, and guaranteeing authenticity was in the CA's business interest. The CAs kept their private keys behind razor wire and armed guards, and followed strict rules to ensure that only the right people got their certificates signed. Only the CAs themselves or anyone who had enough power to force them to create a fraudulent certificate would be able to execute MITM attacks.

The TLS+X.509 Certificate Authority system works well for HTTP and other application protocols, because:

- Most internet users don't have the patience to manually verify the authenticity of digital certificates.

- Most internet users don't understand or care how it works; they just want to connect right now.

- Businesses and organizations that run websites are generally willing to jump through hoops and subjugate themselves to authorities in order to offer a more secure application experience to their users.

- The centralization & problematic power dynamic which CAs represent is easily swept under the rug; if it doesn't directly or noticeably impact the average person, who cares?

However, this would never fly with SSH. You have to understand, SSH does not come from Microsoft, it does not come from Apple, in fact, it does not even come from Linux or GNU. The SSH that nearly everyone runs today, OpenSSH, comes from BSD. Berkeley Software Distribution. (The OpenBSD project, specifically.) Most people don't even know what BSD is. It's Deep Nerdcore material. The people who maintain SSH are not playing around, they would never allow themselves to be subjugated by so-called "Certificate Authorities". So, what are they doing instead? Where is SSH at? Well, back when it was created, computer security was easy: a very minimal defense was enough to deter attackers. In order to help prevent these MITM attacks, instead of something like X.509, SSH employs a policy called Trust On First Use (TOFU).

The SSH client application keeps a record of every server it has ever connected to in a file, ~/.ssh/known_hosts. (The tilde ~ here represents the user's home directory: /home/username on Linux, C:\Users\username on Windows, and /Users/username on macOS.)

(Also, note that as the .ssh folder's name starts with a period, it's a "hidden" folder. This just means that your operating system's Graphical User Interface (GUI) will not display it by default. All operating systems have a way to enable "Show Hidden Files" in the GUI; otherwise you can always access it via the command line.)

If the user asks the SSH client to connect to a server it has never seen before, it will print a prompt like this to the terminal:

The authenticity of host 'fooserver.com (69.4.20.69)' can't be established.

ECDSA key fingerprint is SHA256:EXAMPLE1xY4JUVhYirOVlfuDFtgTbaiw3x29xYizEeU.

Are you sure you want to continue connecting (yes/no/[fingerprint])?

Here, the SSH client is displaying the fingerprint (SHA256 hash) of the public key provided by the server at fooserver.com.
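If you are curious how that fingerprint is derived, here is a minimal sketch: OpenSSH takes the raw key blob (the base64 part of a public key line), hashes it with SHA-256, and prints the hash in base64 without the trailing padding. The function name `sha256_fingerprint` and the dummy key below are made up for this example; the dummy is not any real server's key.

```python
import base64
import hashlib

def sha256_fingerprint(public_key_line):
    """Compute an OpenSSH-style SHA256 fingerprint from a line such as
    'ssh-ed25519 AAAA... comment'."""
    key_type, key_base64 = public_key_line.split()[:2]
    key_blob = base64.b64decode(key_base64)
    digest = hashlib.sha256(key_blob).digest()
    # OpenSSH prints the digest in base64 with the trailing '=' padding removed.
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")

# Dummy key blob invented for this example (32 zero bytes, not a real key).
dummy_blob = b"\x00\x00\x00\x0bssh-ed25519" + b"\x00\x00\x00\x20" + bytes(32)
dummy_line = "ssh-ed25519 " + base64.b64encode(dummy_blob).decode("ascii") + " demo"

fingerprint = sha256_fingerprint(dummy_line)
print(fingerprint)
```

On a real server, running `ssh-keygen -lf` against one of the host's public key files prints the fingerprint in this same format, so the two can be compared by eye.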

Back in the day, when SSH was created, servers lived for months to years, not minutes, and they were installed by hand. So it would have been perfectly reasonable to call the person installing the server on their Nokia 909 and ask them to log into it & read off the host key fingerprint over the phone. After verifying that the fingerprints match in the phone call, the user would type yes to continue.

After the SSH client connects to a server for the first time, it will record the server's IP address and public key in the ~/.ssh/known_hosts file. All subsequent connections will simply check the public key the server presents against the public key it has recorded in the ~/.ssh/known_hosts file. If the two public keys match, the connection will continue without prompting the user. However, if they don't match, the SSH client will display a scary warning message:

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

@ WARNING: POSSIBLE DNS SPOOFING DETECTED! @

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

The ECDSA host key for fooserver.com has changed,

and the key for the corresponding IP address 69.4.20.42

is unknown. This could either mean that

DNS SPOOFING is happening or the IP address for the host

and its host key have changed at the same time.

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

@ WARNING: REMOTE HOST IDENTIFICATION HAS CHANGED! @

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

IT IS POSSIBLE THAT SOMEONE IS DOING SOMETHING NASTY!

Someone could be eavesdropping on you right now (man-in-the-middle attack)!

It is also possible that a host key has just been changed.

The fingerprint for the ECDSA key sent by the remote host is

SHA256:EXAMPLEpDDefcNcIROtFpuTiHC1j3iNU74aaKFO03+0.

Please contact your system administrator.

Add correct host key in /root/.ssh/known_hosts to get rid of this message.

Offending ECDSA key in /root/.ssh/known_hosts:1

remove with:

ssh-keygen -f "/root/.ssh/known_hosts" -R "fooserver.com"

ECDSA host key for fooserver.com has changed and you have requested strict checking.

Host key verification failed.

This is why it's called Trust On First Use: the SSH protocol assumes that when you type yes in response to the prompt during your first connection, you really did verify that the server's public key fingerprint matches. If you type yes here without checking the server's host key somehow, you could add an attacker's public key to the trusted list in your ~/.ssh/known_hosts file; if you type yes blindly, you are technically vulnerable to a man-in-the-middle attack. Such an attack could silently surveil your connection, inject commands, even emulate / falsify the entire SSH session.
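The TOFU decision itself is tiny, which is part of the problem. Here is a rough sketch of the logic, assuming a plain dictionary in place of the real known_hosts file and a made-up `user_confirms` callback in place of the yes/no prompt; it is not how OpenSSH is actually written.

```python
def check_host_key(known_hosts, host, presented_key, user_confirms):
    """Return True if the connection may proceed under Trust On First Use."""
    recorded_key = known_hosts.get(host)
    if recorded_key is None:
        # First contact: nothing to compare against, so the user decides.
        if user_confirms(host, presented_key):
            known_hosts[host] = presented_key
            return True
        return False
    # Every later connection: the key must match what was recorded.
    return recorded_key == presented_key

known_hosts = {}  # stand-in for ~/.ssh/known_hosts

def blindly_yes(host, key):
    """A user who types yes without checking the fingerprint."""
    return True

# First connection is intercepted, and the user accepts without checking.
first = check_host_key(known_hosts, "fooserver.com", "key-from-attacker", blindly_yes)

# Later, the real server is reached directly and its key no longer matches.
later = check_host_key(known_hosts, "fooserver.com", "key-from-real-server", blindly_yes)

print(first, later)  # True False
```

Once the wrong key has been trusted, it is the genuine server that gets rejected, and the attacker's key keeps being accepted silently.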

Will anyone actually attack you like that? Probably not, because such an attack would be difficult to hide from someone who knows where to look. Personally, however, I'd rather not fuck around and find out. I'd rather find a way to prove to myself that my first SSH connection to a new server is secure, especially if it's a potentially ephemeral virtual machine like a capsul.

So what are technologists to do? Most cloud providers don't "provide" an easy way to get the SSH host public keys for instances that users create on their platform. For example, see this question posted by a frustrated user trying to secure their connection to a digitalocean droplet.

Besides using the provider's HTTPS-based console to log into the machine & directly read the public key, providers also recommend using a "userdata script".

This script would run on boot & upload the machine's SSH public keys to an object storage system like Backblaze B2 or Amazon S3[1], for an application to retrieve later.

As an example, I wrote a userdata script which does this for my own automated VPS management code in greenhouse. Later in the process, greenhouse will download the public keys from the Object Storage provider and add them to the ~/.ssh/known_hosts file before finally invoking the ssh client against the cloud host.

Personally, I think it's disgusting and irresponsible to require users to go through that much work just to be able to connect to their instance securely. However, this practice appears to be an industry standard. It's gross, but it's where we're at right now.

So for capsul, we obviously wanted to do better. We wanted to make this kind of thing as easy as possible for the user, so I'm proud to announce that, as of today, capsul SSH host key fingerprints will be displayed on the capsul detail page, as well as the host's SSH public keys themselves in ~/.ssh/known_hosts format. Users can simply copy and paste these keys into their ~/.ssh/known_hosts file and connect with confidence that they are not being MITM attacked.
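If you would rather script the copy and paste step, something like the following sketch would do it. The helper name `add_known_hosts_lines` is made up, and the key shown is a placeholder rather than a real capsul host key; the demo writes to a temporary file so it never touches your real ~/.ssh/known_hosts.

```python
import tempfile
from pathlib import Path

def add_known_hosts_lines(lines, known_hosts_path=None):
    """Append known_hosts-format lines that are not already in the file.
    Returns the list of lines that were actually added."""
    if known_hosts_path is None:
        path = Path.home() / ".ssh" / "known_hosts"
    else:
        path = Path(known_hosts_path)
    path.parent.mkdir(mode=0o700, parents=True, exist_ok=True)
    current = path.read_text() if path.exists() else ""
    seen = set(current.splitlines())
    added = []
    for line in lines:
        line = line.strip()
        if line and line not in seen:
            seen.add(line)
            added.append(line)
    if added:
        with path.open("a") as f:
            # Make sure we start on a fresh line if the file lacks a final newline.
            if current and not current.endswith("\n"):
                f.write("\n")
            f.write("\n".join(added) + "\n")
    return added

# Demo against a temporary file, with a placeholder key.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = Path(tmp) / "known_hosts"
    pasted = ["fooserver.com ssh-ed25519 AAAAEXAMPLEKEY"]
    first = add_known_hosts_lines(pasted, demo_path)
    second = add_known_hosts_lines(pasted, demo_path)
    contents = demo_path.read_text()

print(first, second)
```

Calling it a second time with the same lines adds nothing, so it is safe to re-run.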

Why ssh more ssh

SSH is a relatively low-level protocol; it should be kept simple and it should not depend on anything external. It has to be this way, because oftentimes SSH is the first service that runs on a server, before any other services or processes launch. The SSH server has to run no matter what, because it's what we're gonna depend on to log in there and fix everything else which is broken! Also, SSH has to work for all computers, not just the ones which have internet access or are reachable publicly. So, arguing that SSH should be wrapped in TLS or that SSH should use X.509 doesn't make much sense.

ssh didn’t needed an upgrade. SSH is perfect

Because of the case for absolute simplicity, I think that in a cloud based use-case it might even make sense to remove the TOFU and make the ssh client even less user friendly: require the expected host key to be passed in on every command. This could finally remove some of the fine-print from real-world SSH usage and make the protocol easier for the uninitiated to understand. In order to make it human-friendly again while keeping the security benefits, we can create a new layer of abstraction on top of SSH: regime-specific automation & wrapper scripts.

For example, when we build a JSON API for capsul, we could also provide a capsul-cli application which contains an SSH wrapper that knows how to automatically grab & inject the authentic host keys and invoke ssh in a single command.
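Such a wrapper does not exist yet, so the following is only a sketch of what its core could look like. The function name `build_ssh_command` is hypothetical, and how the authentic host key would be fetched (for example from a future capsul API) is left out entirely; the key is simply passed in. The two `-o` settings, `UserKnownHostsFile` and `StrictHostKeyChecking`, are standard OpenSSH client options.

```python
import tempfile

def build_ssh_command(user, host, host_key_line, known_hosts_path):
    """Write the expected host key to its own known_hosts file and return an
    ssh argument list that uses that file and never shows the TOFU prompt."""
    with open(known_hosts_path, "w") as f:
        f.write(host_key_line.strip() + "\n")
    return [
        "ssh",
        "-o", f"UserKnownHostsFile={known_hosts_path}",
        "-o", "StrictHostKeyChecking=yes",
        f"{user}@{host}",
    ]

# Demo with placeholder values; nothing is executed here.
with tempfile.TemporaryDirectory() as tmp:
    path = f"{tmp}/known_hosts"
    command = build_ssh_command(
        "username",
        "fooserver.com",
        "fooserver.com ssh-ed25519 AAAAEXAMPLEKEY",  # placeholder, not a real key
        path,
    )
    with open(path) as f:
        written = f.read()

print(command)
```

A real wrapper would hand the resulting list to something like `subprocess.run(command)`. With strict checking on, ssh refuses to connect when the server's key is unknown or different, instead of asking the user to type yes.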

Cheers and best wishes,

Forest

[1] fuck amazon
