Matrix certainly has its problems, which folks are often quick to criticize:

- The protocol design makes moderation harder than it should be

- There's no "admin panel" for a matrix server; it's administered via CLI

- It has bugs and can be hard to maintain

- IRC users hate it because the Matrix IRC bridge was made by Matrix people, not IRC people, so it ham-fistedly tries to fit Matrix content into IRC instead of limiting a bridged room to content that IRC supports.

But I think Matrix's benefits outweigh its drawbacks, and I am happy to support the cyberia.club matrix server as needed.

Disk space has been our single most troublesome problem so far.

For a long time, we kicked the can down the road by increasing the disk space on the VM we use to host our matrix server. But obviously, we can't do this forever.

Upon further investigation, we learned that the postgres database was using up all the disk space, and specifically that a single table in the database, called state_groups_state, was responsible.

Here is an excerpt from the "Origin Story" on the matrix-synapse-diskspace-janitor repository:

The problem at hand:

Matrix-synapse stores a lot of data that it has no way of cleaning up or deleting.

Specifically, there is a table it creates in the database called state_groups_state:

    root@matrix:~# sudo -u postgres pg_dump synapse -t state_groups_state --schema-only
    --
    -- PostgreSQL database dump
    --
    ...
    CREATE TABLE public.state_groups_state (
        state_group bigint NOT NULL,
        room_id text NOT NULL,
        type text NOT NULL,
        state_key text NOT NULL,
        event_id text NOT NULL
    );

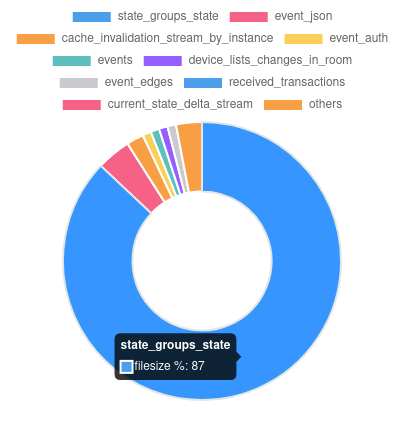

I don't understand what this table is for. However, I can recognize fairly easily that it accounts for the vast majority of the disk space bloat of a matrix-synapse instance:

top 10 tables by disk space used, cyberia.club instance:
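For anyone who wants to check their own instance, a query along these lines should produce a similar ranking. This is a minimal sketch, not necessarily the query behind the numbers here: the function name `top_tables_sql` is made up for illustration, and the database name `synapse` is assumed from the pg_dump command above.

```shell
#!/bin/sh
# Hypothetical helper: print a query listing the ten largest tables.
# pg_total_relation_size counts each table's indexes and TOAST data too.
top_tables_sql() {
  cat <<'SQL'
SELECT relname AS table_name,
       pg_size_pretty(pg_total_relation_size(relid)) AS total_size
FROM pg_catalog.pg_statio_user_tables
ORDER BY pg_total_relation_size(relid) DESC
LIMIT 10;
SQL
}

# Usage (assumes the database is named "synapse"):
#   top_tables_sql | sudo -u postgres psql synapse
```

The function only prints the SQL, so you can read it before piping it into psql.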

So, I think it's safe to say that if we can cut down the size of state_groups_state, then we can solve our disk space issues.

I know that there are other projects dedicated to this, like https://github.com/matrix-org/rust-synapse-compress-state

However, a cursory examination of the data in state_groups_state led me to believe maybe there is an easier and better way.

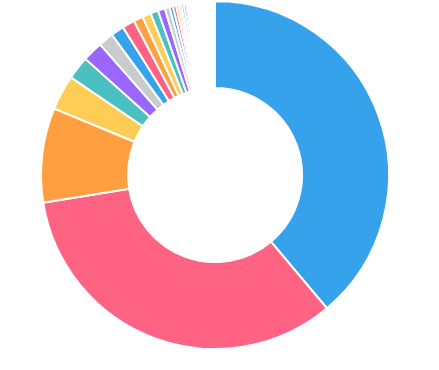

state_groups_state DOES have a room_id column on it. It's not indexed by room_id, but we can still count the # of rows for each room and rank them:

top 100 rooms by number of state_groups_state rows, cyberia.club instance:
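The per-room count can be sketched as a plain GROUP BY over the table. Again, this is an illustration under assumptions rather than the exact query used here: `top_rooms_sql` is a made-up name and the database is assumed to be called `synapse`. Because room_id is not indexed, expect a full table scan, which can take a long time on a table with hundreds of millions of rows.

```shell
#!/bin/sh
# Hypothetical helper: print a query ranking rooms by how many
# state_groups_state rows they own.
top_rooms_sql() {
  cat <<'SQL'
SELECT room_id, count(*) AS num_rows
FROM state_groups_state
GROUP BY room_id
ORDER BY num_rows DESC
LIMIT 100;
SQL
}

# Usage (assumes the database is named "synapse"):
#   top_rooms_sql | sudo -u postgres psql synapse
```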

In summary, it looks like about 90% of the disk space used by matrix-synapse is in state_groups_state, and about 90% of the rows in state_groups_state come from just a handful of rooms.

So from this information we have hatched a plan:

Just delete those rooms from our homeserver

Unfortunately, the matrix-synapse delete room API does not remove anything from state_groups_state.

This is similar to the way that the matrix-synapse message retention policies also do not remove anything from state_groups_state.

In fact, this probably helps explain why state_groups_state gets hundreds of millions of rows and takes up so much disk space: Nothing ever deletes from it!!

We did come up with some shell scripts to handle this, but it was an annoying recurring manual maintenance burden. Due to the complicated, multi-step nature of the cleanup process, I ended up creating an application to handle it instead of continuing to try to script it. Yes, it's probably overkill, but it was fun. And who knows, maybe it can be useful to someone else.
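To give a rough idea of the database side of that cleanup, here is a sketch of what removing one room's rows by hand could look like. It is an illustration under assumptions, not the janitor's actual code: `delete_room_state_sql` is a made-up name, the database is assumed to be called `synapse`, and it assumes the room has already been deleted from the homeserver and that you have a backup.

```shell
#!/bin/sh
# Hypothetical helper: print a DELETE statement for a single room.
# The room ID is supplied as a psql variable (:'room_id') so that
# psql quotes it as a SQL string literal.
delete_room_state_sql() {
  cat <<'SQL'
DELETE FROM state_groups_state WHERE room_id = :'room_id';
SQL
}

# Usage (assumes the database is named "synapse"; the room ID is a placeholder):
#   delete_room_state_sql | sudo -u postgres psql synapse -v room_id='!someroom:example.org'
```

The SQL is piped in on stdin rather than passed with `-c`, because psql does not substitute variables in `-c` commands. Also keep in mind that a plain DELETE in postgres only marks rows as dead; the space becomes reusable after a vacuum, and handing it back to the operating system generally takes something like VACUUM FULL.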

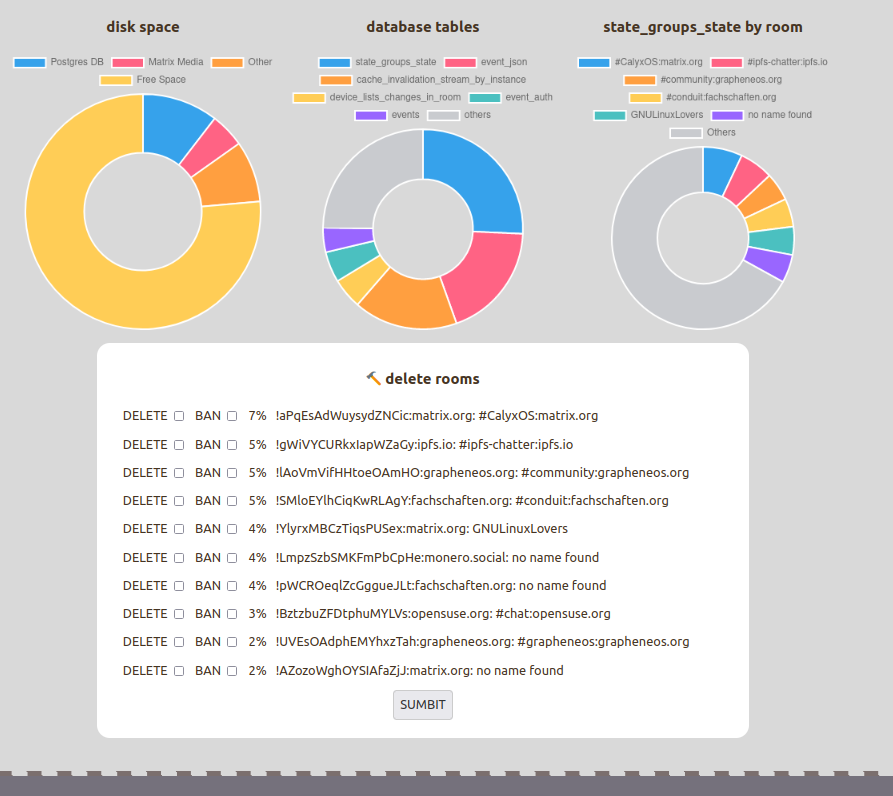

Anyways, this is what it looks like:

You log in with your matrix account:

And then you can see what's using disk space, and if there are any big rooms, you can delete them, including deleting the state_groups_state rows:
